A fundamental methodological flaw in the way we do pro-animal messaging studies

- Animal cruelty based messages are more effective than health or environmental ones

- Is reducetarian messaging effective?

TLDR: The messages tested in studies are rarely a random sample from the population of possible messages, and most studies use only 1 example of each message type. This means that while their results will generalise to people not in the study, they may fail to generalise to vegan messages that differ from those tested.

How do we convince people to take positive action for animals? This is one of the most fundamental questions that most animal advocacy organisations face. In a sense, it's a question about persuasion. If we want to convince someone to sign up for Veganuary, or support plant-based options in their schools, are we better off appealing to the environmental damage caused by animal agriculture? Or the health benefits of plant-based diets? Or should we highlight the animal cruelty involved in animal products? In the last few years, a plethora of studies have been published investigating what types of messaging are most effective for animal advocacy campaigns. The problem is, there is strong reason to suspect the majority of them are basically useless.

I reviewed 17 studies/reports where researchers compared people's responses to pro-animal messages (videos, essays, Facebook ads). The goal of these studies was to establish what types of messages are most effective at encouraging pro-animal behaviour, such as reducing meat consumption or signing petitions. Worryingly, a majority of them (71%) use a design that cannot tell the researchers what they want to know. This is because these studies compared only 1 specific example of each message type.

Let's say we want to compare the effectiveness of vegan messaging. We want to see which is most convincing: environmental messaging, health messaging or animal suffering messaging. So we gather up some participants and show each of them one of the messages. Then we measure how convinced they are that factory farming is a problem. How many participants do we need for this study? 100? 1000? 10,000? Why not three? Yes, three people. After all, we've only got three messages. So we give each message to one person and then we measure the difference. What an easy study!

Even if you're not a researcher, it's pretty obvious why this is not a good idea. You cannot generalise from 3 people to the entire human population. So why do we generalise from 1 health message, 1 environmental message and 1 animal welfare message to all the possible messages we could come up with?

Let's say I have a friend with a large, well-kept beard, and I have 400 women rate his attractiveness from a photograph. I then get 400 women to rate a photo of me after a clean shave. Imagine that my friend's attractiveness score averages 8.5 and mine a lowly 7. Can I conclude that bearded men are more attractive than clean-shaven men? Obviously not. I've only looked at 1 example of a bearded man and 1 example of a clean-shaven man, so the result can't generalise. If I get 500 more women to rate each of us, it still won't fix the problem. We'll just get even more sure that I'm the uglier friend. But we won't get any closer to the answer we're after.

Consider a real example. In Herchenroeder et al. (2023), "Participants watched a brief (~10 minute) video excerpt from the documentary film H.O.P.E. What You Eat Matters by Nina Messinger. In these videos a variety of well-respected experts revealed the effects of meat consumption on either animal welfare (i.e., the inhumane living conditions of factory farmed animals), the environment (i.e., climate change and deforestation), or health (i.e., the increased risk of heart disease, cancer, diabetes). Participants in the control condition watched a brief (~10 minute) video excerpt from the documentary film Voices of Debt – The Student Loan Crisis: Don’t Major in Debt by Michael Porte which discussed the negative effects of student loans on college students and recent graduates." They found that participants who saw the environmental video had higher intentions to reduce meat than the control group, who watched a video about debt, but neither the health message nor the animal welfare one was effective.

Can we conclude from this study that animal welfare and health messages don't work? NO! Of course not. We can only conclude that the specific health message and the specific animal welfare message used in this study were not more effective than the control. We cannot say that just because this one example of a health message was no better than a control, all health messages are no better. To do that would be to assume that all health messages are as effective as this one, which is ridiculous. Some health messages are clearly better than others. We'd also have to assume that no message works better than the one they chose.

So if I need 500 participants to generalise, are you saying I also need 500 different versions of my messages?!

Luckily, no. But studies indicate that you should probably have between 8 and 32 examples of each message type. Ideally, each message is seen by more than 1 participant, and each participant sees a random message.

You might be wondering why you might need hundreds of participants but far fewer messages. The key difference is in how much they vary. People are very different from each other, so you need lots of people in your study to capture that diversity. Vegan messages probably don't vary nearly as much: all pro-environment messages are kind of similar (all variations on "animal products are bad for the planet"), all health messages are quite similar (all variations on "meat is bad for you") etc. Sure, there are many ways to make the environmental argument for veganism (you might focus on water use, deforestation, greenhouse gases), but they're not as different from each other as people are. If they don't vary much, you don't need as many different examples to get a representative sample.
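The trade-off between participants and messages can be put into rough numbers. The sketch below is illustrative only. It assumes a simple model in which each message's true effect differs from its type's average by a random amount (`sd_between_messages`), each participant sees one message, and individual responses add noise (`sd_between_people`). The function name and all numeric values are hypothetical and are not taken from any of the studies discussed here.

```python
import math


def se_of_type_mean(n_messages, n_per_message,
                    sd_between_messages, sd_between_people):
    """Standard error of the estimated average effect of a message TYPE.

    Assumed model (an illustration, not any study's actual analysis):
    each message's true effect = type average + message-specific deviation,
    and each participant's response = message effect + individual noise.
    """
    # Uncertainty from having sampled only a few messages of this type.
    message_part = sd_between_messages ** 2 / n_messages
    # Uncertainty from having sampled only some people per message.
    people_part = sd_between_people ** 2 / (n_messages * n_per_message)
    return math.sqrt(message_part + people_part)


if __name__ == "__main__":
    # Hypothetical values: messages vary far less than people do.
    sd_msg, sd_ppl = 0.2, 1.0
    designs = [
        ("1 message, 1,000,000 participants", 1, 1_000_000),
        ("8 messages, 50 participants each", 8, 50),
        ("32 messages, 50 participants each", 32, 50),
    ]
    for label, m, n in designs:
        se = se_of_type_mean(m, n, sd_msg, sd_ppl)
        print(f"{label}: SE = {se:.3f}")
```

Under these assumptions, the message term does not shrink no matter how many participants are added, so a single-message study can never be more precise about the message type than the spread between messages allows. A modest number of messages reduces that term quickly, which is why far fewer messages than participants are needed.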

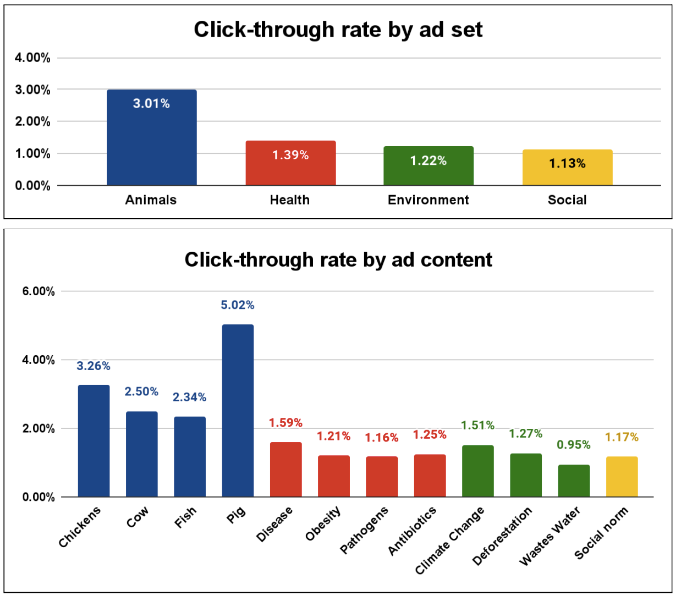

We can see this in data from a collaboration between Bryant Research and Mercy for Animals on the effectiveness of different Facebook ads:

When we look at the 4 health ads, we can see they vary in their performance, from 1.16% to 1.59%. The best performing is about 1.4x better than the worst performing (1.59 / 1.16 ≈ 1.37). That's not a whole lot, so we may not need as many as 32 ads of each type.

But this graph also shows us the danger of using 1 example of each message. If I used the "wastes water" message, I might have concluded that environmental messages are very ineffective compared to social norms or health messages. If I used the climate change one, I would probably conclude that environmental messages are stronger than social norms or health messages.

Encouragingly, this study finds that of seven different environmental messages, there were no significant differences in their effectiveness at reducing beef consumption. If environmental messages do not differ much in their effects, then we do not need to test a large number of them to accurately compare them to animal welfare messages.

Large samples of participants make the problem worse

What makes this problem even more insidious is that it gets worse with larger samples of participants. With a large enough sample, you become almost guaranteed to find that one message is significantly better than another, so any bias in the choice of messaging materials gets reported with even greater confidence.

Imagine a hypothetical case. Let's assume health messages are truly more effective than environmental messages at converting people to veganism. But we don't know this yet, so we design a study to find out. We give our study to 1 million people from around the world, a truly heroic effort. We give them one of 2 messages: one is some variation of “Go vegan, it will improve your health” and the other is “Go vegan, it will save the planet”.

Are we guaranteed to discover the “truth” here? Will our study always find that health messages are more effective? What if we unknowingly design a poor health message and a strongly convincing environmental message? In that case, our study will probably show that environmental messages are more effective, and our sample size of 1 million means our p-value will be highly significant. We would then wrongly conclude with high confidence that environmental messages are far more convincing than health messages. In reality, all we have learned is that we can be highly confident that the specific environmental message we used is better than the specific health message.
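This thought experiment is easy to simulate. The sketch below is a toy model, not a reanalysis of any real study: it assumes health messages are better on average, that individual messages scatter around their type's average, and that participant noise shrinks with sample size in the usual way. The function name and every numeric value are made up for illustration.

```python
import random


def share_of_wrong_conclusions(n_messages_per_type, n_participants_per_type,
                               n_studies=10_000, seed=1):
    """Fraction of simulated studies that rank environmental above health,
    even though health is better on average (all values are assumptions)."""
    rng = random.Random(seed)
    true_health, true_env = 0.30, 0.20  # assumed true type averages
    sd_messages = 0.15                  # assumed spread between messages
    sd_people = 1.0                     # assumed spread between participants
    wrong = 0
    for _ in range(n_studies):
        estimates = []
        for type_average in (true_health, true_env):
            # Draw the particular messages this study happens to use.
            effects = [rng.gauss(type_average, sd_messages)
                       for _ in range(n_messages_per_type)]
            average_effect = sum(effects) / n_messages_per_type
            # Participant sampling noise shrinks as the sample grows.
            noise = rng.gauss(0, sd_people / n_participants_per_type ** 0.5)
            estimates.append(average_effect + noise)
        health_estimate, env_estimate = estimates
        if env_estimate > health_estimate:
            wrong += 1
    return wrong / n_studies


if __name__ == "__main__":
    for n_messages in (1, 4, 16):
        share = share_of_wrong_conclusions(n_messages, 1_000_000)
        print(f"{n_messages} message(s) per type: "
              f"{share:.1%} of studies point the wrong way")
```

With 1 message per type and a million participants per type, roughly three in ten simulated studies favour the wrong message type under these assumptions, and each of those would look overwhelmingly significant. Adding messages, not participants, is what brings that share down.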

Will this problem be solved by meta-analysis?

Technically, the issue of low stimulus diversity could be solved by meta-analysis of many studies. However, it would take an infeasible number of studies to solve this. Aggregating the findings of 2 studies where each only tested 1 message variant is equivalent to a study with only 2 message variants. Extrapolating this, a meta-analysis aggregating 16 messaging studies would approximate only a single well-conducted study. This is grossly inefficient.

As well as being inefficient, relying on meta-analysis requires all studies to be as similar in methods as possible, which we cannot assume will always be the case. Indeed, the 17 studies reviewed below cannot all be combined: Grummon et al., 2023 measured the effect of messaging on red meat orders at a restaurant, whereas Faunalytics (2012) measured whether messaging in the form of a video affected intentions to reduce meat. It is not easy to combine the results of these 2 studies in a meaningful way.

A review of studies comparing different messages to encourage pro animal behaviour

Note that this list is non-exhaustive.

Studies with no message diversity

- Souza et al., 2022 used only 1 vignette for each of environment, animal welfare and health.

- Faunalytics. (2012). What is the Most Effective Veg Outreach Video? Faunalytics.

- Doebel and Gabriel (The Humane League) used 1 example of each pamphlet

- Herchenroeder et al., 2023 used 1 example each of health, animal welfare and environment. Found only the environmental message worked.

- Palomo-Velez et al., 2018, suffers from this problem even more than other studies, because studies 1 and 2 used basically the same messages (with minor tweaks). The fact that they had multiple studies makes their findings look more robust, but using the same essays in 2 studies means that they are just doubling any bias.

- Vainio, Irz and Hartikainen (2018)

- Lai et al., 2020

- De Cianni (2024) used 1 health and 1 environmental message.

- Ye & Matilla, 2021

- Wolstenholme,

- Ye and Matilla 2022 compared literal and figurative messaging on the environmental impacts of animal agriculture. They only used 1 example of each.

- Dijkstra and Rotelli, 2022 found only health messages reduced red meat consumption more than the control (animal and environmental did not).

- Grummon et al., 2023 found environmental and health messages reduced red meat orders at a restaurant but animal welfare did not.

- Cisternas et al., 2024, published in Cell, had 5000 participants but only 1 example of each message.

- Fonseca and De Groeve (2025) 3 images.

- Alexander-Haw et al., (2025)

- Xu et al., (2023)

- Mrchkovska et al., (2024)

- Carfora et al., (2025)

- ATT 2023c

Studies with some message diversity

- Bertolotti et al. (2019) had 2 examples, because they crossed message type (health or wellbeing) with another variable.

- ATT (2023a) study 2 used 2

- ATT (2023b) used 2

- ATT (2025) used 2

Studies with minimally acceptable message diversity

- ATT (2023a) study 1 used 4

- Similarly, Isham et al., 2022 had 4 dishes (5 in study 2) and varied whether they got a health framing or an environmental framing.

- Animal Think Tank, 2025b, study 1, used 6

- Cooney (Humane League labs) avoided the low message diversity problem unwittingly. He compared cruelty to health messaging, but because he also compared a bunch of other things too, participants ended up seeing 1 of 4 slightly different versions of the health argument or animal cruelty argument.

- Not wanting to toot my own team's horn here, but a Bryant Research study in collaboration with Mercy For Animals used 4 versions each of environmental, health and animal messages. This isn't perfect, but it is much better than just 1.

Studies with decent message diversity

- Taillie et al., 2022. Annoyingly, it only looks at environmental vs health messaging and doesn't include an animal one. It compared 10 different environmental messages and 8 different health messages, but each participant either received all the health messages or all the environmental ones. I think this is fine though: basically each participant's response will be an average (or perhaps sum?) of their responses to each message. This effectively means the study is comparing the average effectiveness of the 8 health messages to the average effectiveness of the 10 environmental messages.

- Carfora et al., 2019 had a chatbot message participants on Facebook every morning for 2 weeks, giving them environmental and health messages. They compared messages with an informational tone to messages with an emotive tone, and found emotive messages are more effective. In this study participants will have received something like 14 different messages, which, similarly to the study above, effectively means their results are the average effectiveness of 14 messages.

- Carfora et al., (2023) sent participants a legume-based message every day for 14 days.

- Similarly, Carfora et al., (2024) showed participants 18 messages per condition, but over 36 days.

- Lin et al., (2024) used 8, because of a 2 x 2 x 2 design

- Animal Think Tank, 2025b, study 2, used 10

- ATT, 2025a used 2 or 10 depending on the test

- This ATT study used 8, 10 or 20 depending on what it tested

- Note however that it's not clear from the Carfora studies that the authors are aware of the problem; it seems their longitudinal design meant they had to have multiple message types, and so they may have dodged the problem unintentionally.

Recommendations and conclusions

- I'm sorry to say, but studies that use 1 or 2 examples of each message are only good for 1 thing: telling you which of 2 specific messages is better. They cannot tell you much at all about general trends you can apply to future messages (health vs animal cruelty for example), even if they have large numbers of participants.

- If a study wants to generalise beyond the specific messages used, it should at a bare minimum use 4 examples of each message. For a study to be considered good evidence, it should use more than 8 versions of each message. Each participant only needs to see 1 message, and each message doesn't need to be seen by loads of participants.

- Studies that use multiple versions of each message should ideally be analysed according to best practices, using mixed effects models rather than simply averaging the performance of all message variants together.

- Researchers can structure their studies to allow their results to be easily compared and aggregated. Studies that measure the persuasiveness of messages individually are more comparable than studies that measure relative persuasiveness. For example, if a study shows participants 2 messages and asks them to choose which is more persuasive, the results of that study, while useful in isolation, cannot be compared with another study that uses a similar design. On the other hand, if the study showed participants a single message and asked them to rate how persuasive it was on a 7 point scale, we can obtain a measure of the persuasiveness of that message that can be compared to other messages in other studies. Likewise, using standard metrics like click-through rates on social media advertisements (e.g. Bryant et al.) can allow researchers to compare messages across studies more easily.

- Ideally, someone should do some work to establish how much variance there is in the messages from these studies. This could be achieved by creating a large bank of messages of different types, and testing them all for persuasiveness. Even better would be to get the messages used by the authors in the studies above and test them all at once. Then for each message type (health, animal cruelty, environment etc), we could examine the variance in persuasiveness. If that variance is small, it suggests that a meta analysis of studies will largely solve the problem of message diversity. If the variance is large, we should probably just ignore all current published work and start again.
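As a rough illustration of the last few recommendations, the sketch below treats messages, not participants, as the unit of analysis and estimates how much persuasiveness varies between messages of the same type. This is a simplified by-message analysis, not the mixed effects model recommended above, which would need dedicated statistical software. The function names and the ratings are hypothetical, and the code assumes at least 2 messages per type and at least 2 ratings per message.

```python
from statistics import mean, variance


def summarise_message_type(ratings_by_message):
    """ratings_by_message maps a message id to a list of participant ratings."""
    message_means = [mean(r) for r in ratings_by_message.values()]
    n_messages = len(message_means)
    # Observed variance across message means (true variation plus noise).
    var_of_means = variance(message_means)
    # Average sampling noise in a single message mean.
    noise = mean(variance(r) / len(r) for r in ratings_by_message.values())
    # Method-of-moments estimate of true between-message variance, floored at 0.
    between_var = max(0.0, var_of_means - noise)
    return {
        "mean": mean(message_means),
        # Standard error with messages as the unit of analysis.
        "se": (var_of_means / n_messages) ** 0.5,
        "between_message_sd": between_var ** 0.5,
    }


def compare_types(type_a, type_b):
    """Welch-style t statistic for the difference between two message types."""
    a = summarise_message_type(type_a)
    b = summarise_message_type(type_b)
    se_diff = (a["se"] ** 2 + b["se"] ** 2) ** 0.5
    return (a["mean"] - b["mean"]) / se_diff


if __name__ == "__main__":
    # Made-up 7-point persuasiveness ratings, 3 messages per type.
    health = {"h1": [4, 5, 6], "h2": [5, 6, 7], "h3": [3, 4, 5]}
    environment = {"e1": [2, 3, 4], "e2": [3, 4, 5], "e3": [1, 2, 3]}
    print(summarise_message_type(health))
    print("t statistic:", compare_types(health, environment))
```

The `between_message_sd` value is the quantity the final recommendation asks for: if it turns out to be small relative to the differences between message types, pooling single-message studies becomes more defensible, and if it is large, it is not.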

Further reading

- One of the smartest people who has written about this is Daniel O'Keefe. This paper in particular addresses similar concerns to this article as well as additional issues.

- Judd, Westfall and Kenny, 2012 is a classic paper to read on how to conduct studies where samples of participants rate samples of 'experimental stimuli' (in our case this means message variants). It has been cited over 1300 times at the time of writing.